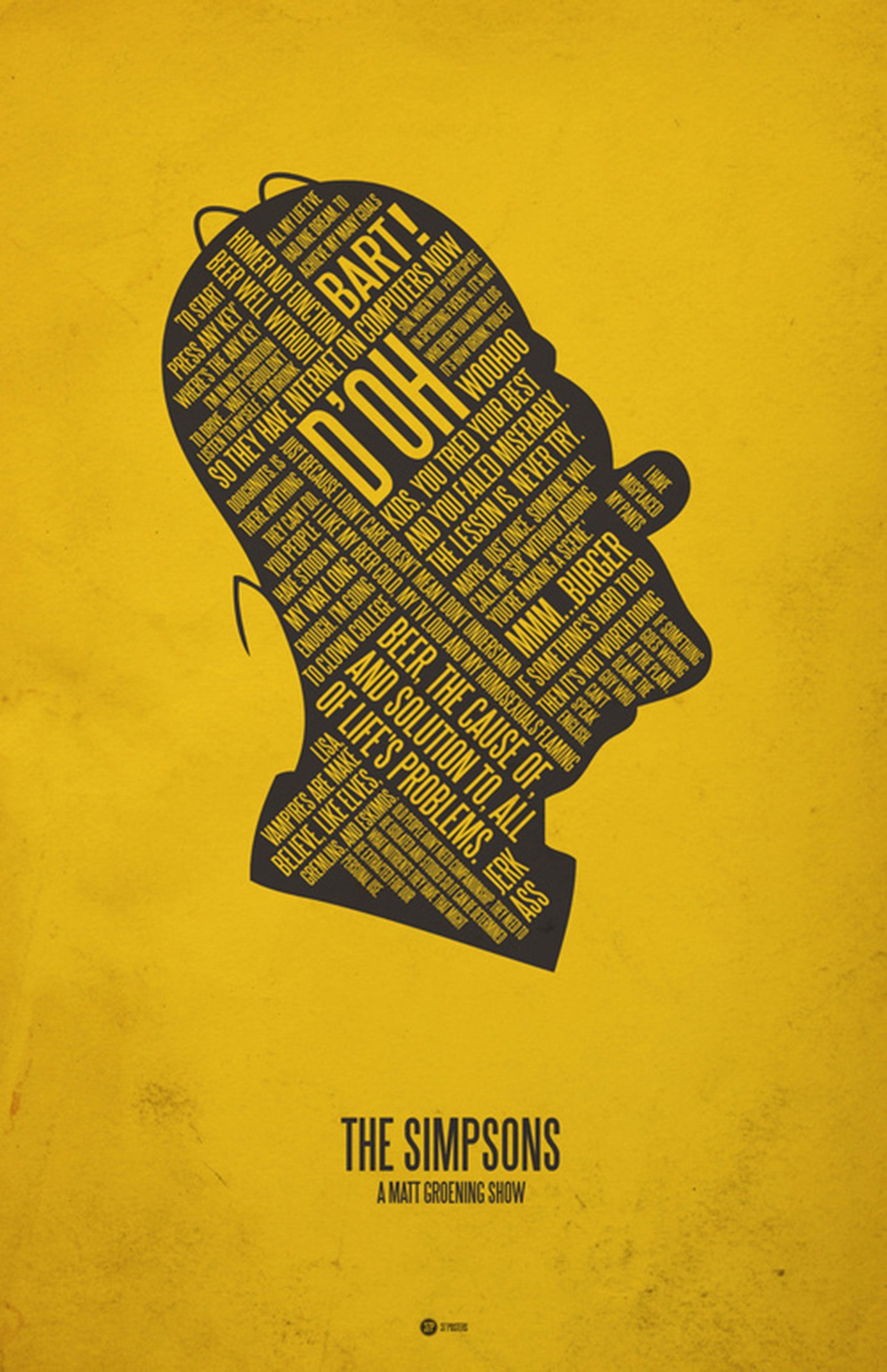

More and more kinds of neural networks are appearing, and they genuinely help people live and work. Some systems forecast the weather, others are learning to make diagnoses, and some have moved into big business. AI in its weak form can already analyze huge data sets and find dependencies between factors that look unrelated at first glance. But of course many problems remain: artificial intelligence cannot yet cope with analyzing the behavior of such a “mysterious” cartoon character as Homer Simpson.

More precisely, the system can identify some of his actions, but not all, even though the neural network was trained on a large number of YouTube videos from The Simpsons. It is worth noting that DeepMind is no newcomer to AI development. For example, one of the systems built by this company, which Google acquired in 2014 and which now belongs to Google's parent holding Alphabet, was able to defeat world champions at the game of Go.

DeepMind's systems, like similar developments from other companies, can analyze huge amounts of information. Because neural networks learn from data, their performance keeps improving over time. Whether the task is face recognition or translation between English and Chinese, the results get better day by day. To teach their system, called Kinetics, to understand human behavior, DeepMind employees “fed” it over 300,000 YouTube videos, training it to distinguish about 400 types of human actions.

“AI systems are now very good at recognizing various objects in images, but their weak side is working with video,” say representatives of DeepMind. “One of the main reasons is the lack of large samples of high-quality videos.”

To solve this problem, DeepMind employees decided to create their own sample. For each of the 400 types of actions, at least 400 clips of about 10 seconds each were “cut” from YouTube videos. The result was one of the first high-quality, specialized data sets intended for training AI. Of course, DeepMind, which built this sample as a division of Google, was lucky, because Google (now part of the Alphabet holding) owns YouTube. Accordingly, DeepMind employees probably had access to specialized tools for working with the video service's material. Other companies will have a harder time, since finding high-quality videos for a specialized data set is not as easy as it might seem.
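To get a feel for the scale, the figures above can be turned into a rough lower bound. This is only a back-of-the-envelope sketch: the three constants come from the article, while the function name and the assumption that every clip lasts exactly 10 seconds are illustrative.

```python
# Rough lower bound on the size of the data set described in the article.
NUM_ACTION_CLASSES = 400    # "400 types of actions"
MIN_CLIPS_PER_CLASS = 400   # "at least 400 videos" per action
CLIP_SECONDS = 10           # "about 10 seconds" (assumed exact here)


def dataset_lower_bound(num_classes, min_clips_per_class, clip_seconds):
    """Return (minimum number of clips, minimum hours of video)."""
    clips = num_classes * min_clips_per_class
    hours = clips * clip_seconds / 3600
    return clips, hours


min_clips, min_hours = dataset_lower_bound(
    NUM_ACTION_CLASSES, MIN_CLIPS_PER_CLASS, CLIP_SECONDS
)
print(f"at least {min_clips:,} clips, roughly {min_hours:.0f} hours of video")
# prints: at least 160,000 clips, roughly 444 hours of video
```

The 160,000-clip minimum is consistent with the total of over 300,000 videos mentioned earlier, since many actions presumably have more than 400 clips.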

Kinetics identified the human actions seen in the videos with about 80% accuracy, which is not bad. True, this applies to ordinary videos where people play tennis, calm a crying child, present a weather forecast, and so on. With Homer Simpson everything is more complicated: here the accuracy drops fourfold, to just 20%. The neural network struggled to identify Homer's actions such as tossing a coin, combing nonexistent hair (the couple of hairs he has left do not count), and others.
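The article does not say how the accuracy was measured. A minimal sketch, assuming the simplest metric (the share of clips whose predicted action matches the true one), might look like this; the function name and the toy labels are hypothetical and are not DeepMind's data.

```python
def top1_accuracy(predicted, actual):
    """Fraction of clips whose predicted action equals the true action."""
    if len(predicted) != len(actual):
        raise ValueError("predicted and actual must have the same length")
    if not actual:
        raise ValueError("at least one clip is required")
    correct = sum(p == a for p, a in zip(predicted, actual))
    return correct / len(actual)


# Hypothetical toy labels, for illustration only.
actual = ["playing tennis", "tossing coin", "combing hair",
          "playing tennis", "tossing coin"]
predicted = ["playing tennis", "tossing coin", "combing hair",
             "playing tennis", "combing hair"]

toy_accuracy = top1_accuracy(predicted, actual)  # 4 of 5 clips match

# The figures quoted in the article: about 80% on ordinary videos
# versus 20% on Homer Simpson, a fourfold drop.
ordinary_accuracy = 0.80
homer_accuracy = 0.20
drop_factor = ordinary_accuracy / homer_accuracy
print(f"toy accuracy: {toy_accuracy:.0%}, drop factor: {drop_factor:.0f}x")
```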

Besides Homer, Kinetics has trouble identifying a dish or product when only part of it is shown. A half-eaten hamburger is recognized much less accurately than a whole one. Problems also arise when the object appears very small in the frame. According to a DeepMind representative, sometimes just a few videos are enough to teach the neural network to recognize an action with high accuracy. But sometimes even a hundred do not improve the accuracy for a specific action.

All this is a fairly well-known problem. For example, earlier this same network had difficulty identifying the faces of people belonging to certain ethnic groups. According to some experts, the algorithms that underlie Kinetics can determine a person's sex from certain features of speech and text.

The neural network from DeepMind can determine the sex of a person in a video (though not in all cases), and it can also evaluate the gender balance of a set of videos. For example, videos of shaving a mustache or beard mostly feature men (no surprise there), while videos of eyebrow grooming or cheerleading mostly feature women. True, gender recognition remains a problem, and the developers still have work to do here.

In the future, work on such systems will most likely make it possible to determine not only what people are doing in a video, but also the reason for their actions. For example, a neural network could determine why a person exclaimed “oh” and explain what caused it. This will require substantial additional work and a great many training data sets.

Perhaps, with better training, Kinetics will learn to identify the actions of Homer Simpson. But who knows: he is a very unpredictable character. Will it work out?