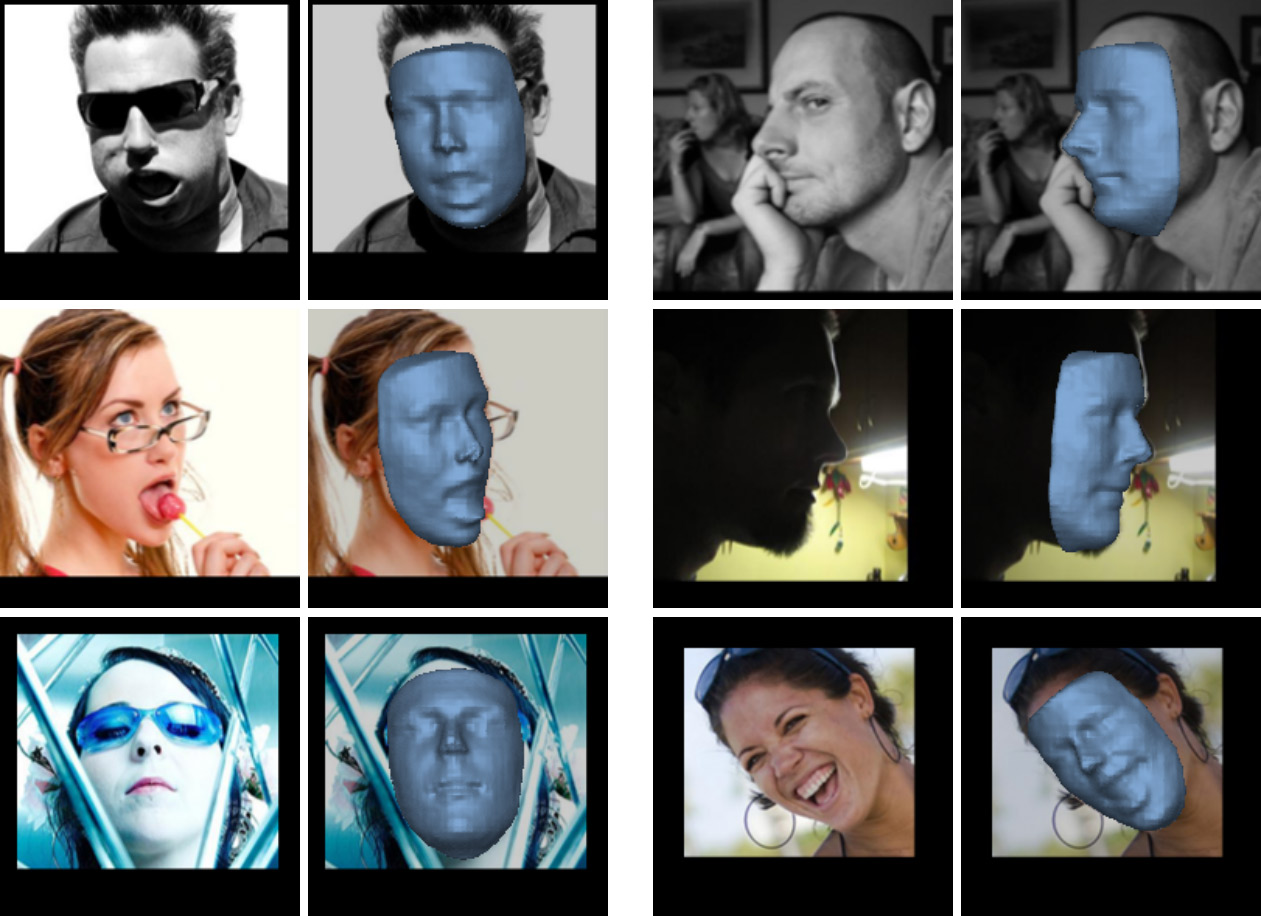

Some results of applying the VRN - Guided method on images from the AFLW2000-3D set

There are a number of startups, including Russian ones, that reconstruct 3D facial structure from photographs. For example, VisionLabs, with its Face.DJ application, can perform 3D reconstruction from a single photo. Such photo-based 3D modeling has practical uses: once a model exists, you can change the hairstyle, try on glasses, grow a beard, and so on. The technology can also be used in face verification and recognition systems.

But the business of such startups is now under threat: their work is easily performed by the new VRN (Volumetric Regression Network) neural network, which is openly available on GitHub. You can upload your own or any other photo directly to the site, and the neural network will convert it online in a few seconds (demo).

3D reconstruction from a 2D photograph is considered one of the fundamental problems of computer vision because of its extreme difficulty. Most current systems need multiple photos of the same person taken from different angles. According to the authors of the new paper, existing methods generally rely on a complex and inefficient pipeline for building the model and fitting the result. As it turned out, a convolutional neural network does the job far more simply and efficiently than hand-designed models and algorithms.

The illustrations show that the VRN neural network copes with a variety of facial expressions at arbitrary angles to the camera, working from a single photo. Foreign objects in front of the face (glasses, a Chupa Chups lollipop) do not get in its way.

The authors, led by Aaron Jackson of the University of Nottingham (UK), used a very simple approach based on voxelization. It avoids many of the drawbacks inherent in other 3D reconstruction methods (including the 3D Morphable Model, 3DMM). The essence of the new VRN method is shown in the illustration below.

(a) The proposed Volumetric Regression Network (VRN) takes an RGB image as input and directly regresses a 3D volume, bypassing 3DMM fitting entirely. Each rectangle is a residual module with 256 features. (b) The proposed VRN - Guided architecture first detects the 2D projection of the 3D landmarks and stacks it with the original image. This stack is fed to the reconstruction network, which directly regresses the volume. (c) The proposed VRN - Multitask architecture regresses both the 3D face volume and a set of sparse 3D landmarks.
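The stacking step in (b) can be illustrated with a small NumPy sketch. It is not the authors' code: it assumes each landmark is rendered as a 2D Gaussian heatmap and concatenated with the RGB image along the channel axis. The function names, the `sigma` value, the image size, and the three landmark positions are all hypothetical.

```python
import numpy as np


def landmark_heatmaps(landmarks_xy, height, width, sigma=2.0):
    """Render one 2D Gaussian heatmap per landmark; returns an (N, H, W) array."""
    ys, xs = np.mgrid[0:height, 0:width]  # pixel coordinate grids
    maps = []
    for x, y in landmarks_xy:
        d2 = (xs - x) ** 2 + (ys - y) ** 2  # squared distance to the landmark
        maps.append(np.exp(-d2 / (2.0 * sigma ** 2)))
    return np.stack(maps).astype(np.float32)


def stack_guided_input(image_chw, landmarks_xy, sigma=2.0):
    """Concatenate an RGB image (3, H, W) with its landmark heatmaps channel-wise."""
    _, h, w = image_chw.shape
    heat = landmark_heatmaps(landmarks_xy, h, w, sigma)
    return np.concatenate([image_chw.astype(np.float32), heat], axis=0)


# Toy example: a blank 64x64 image and three hypothetical landmark positions (x, y).
image = np.zeros((3, 64, 64), dtype=np.float32)
landmarks = [(20.0, 30.0), (44.0, 30.0), (32.0, 45.0)]
stacked = stack_guided_input(image, landmarks)
print(stacked.shape)  # (6, 64, 64): 3 colour channels + 3 heatmaps
```

The reconstruction network would then receive `stacked` in place of the plain image, so the landmark positions are available to it as extra input channels.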

The authors showed that a convolutional neural network (CNN) can successfully generate 3D models from photographs after training on a dataset of photographs paired with the corresponding 3D models. In this case, training used 60,000 2D face photographs from the 300W database and the corresponding 3D meshes obtained with 3DMM.

As it turned out, the neural network does not need a 3DMM to produce a satisfactory result: it successfully performs a direct conversion from 2D to 3D.
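To make the idea of a volumetric target concrete, here is a minimal NumPy sketch of voxelization and of reading a shape back out of a volume. It is an illustration under simplifying assumptions, not the authors' procedure: it marks only the voxels that contain sample points rather than filling a solid, the grid size is arbitrary, and the function names are hypothetical.

```python
import numpy as np


def voxelize(points, grid_shape):
    """Mark every voxel that contains at least one point.

    points: (N, 3) array of (x, y, z) coordinates already scaled to grid units.
    grid_shape: (X, Y, Z) size of the binary volume.
    """
    volume = np.zeros(grid_shape, dtype=np.uint8)
    idx = np.floor(points).astype(int)
    # Keep only the points that fall inside the grid.
    inside = np.all((idx >= 0) & (idx < np.array(grid_shape)), axis=1)
    idx = idx[inside]
    volume[idx[:, 0], idx[:, 1], idx[:, 2]] = 1
    return volume


def occupied_voxels(probabilities, threshold=0.5):
    """Turn a per-voxel probability volume into an array of occupied voxel indices."""
    return np.argwhere(probabilities > threshold)


# Toy example: three points, the last of which lies outside a 4x4x4 grid.
points = np.array([[0.2, 0.7, 1.5], [3.9, 3.1, 0.0], [5.0, 1.0, 1.0]])
volume = voxelize(points, (4, 4, 4))
print(occupied_voxels(volume.astype(float)))
```

A network trained against such volumes predicts an occupancy value per voxel. A real pipeline would typically extract a surface mesh from the thresholded volume instead of listing voxel indices.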

The model's capability has been demonstrated on a large number of arbitrary photos that users upload over the Internet (demo). By all appearances, VRN outperforms other systems for 3D reconstruction from a single photo. To date, the demo has processed more than 400,000 random photos from the Internet.

The neural network can also be run locally on your own computer. The code is published on GitHub. It requires the Torch7 scientific computing framework and a reasonably powerful Nvidia GPU with CUDA support. The program was tested on Linux, and the author has no idea how it behaves under Windows. You will also need MATLAB, bash, ImageMagick, GNU awk, and Python 2.7 (with visvis, imageio, and numpy).
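Before trying a local run, it may help to check that the command-line tools from this list are on your `PATH`. The sketch below is a hypothetical helper, not part of the project. The executable names (`th` for Torch7, `convert` for ImageMagick, `gawk` for GNU awk, `matlab`) are assumptions about a typical Linux installation and may differ on your system. It is written for Python 3, separately from the Python 2.7 that the project itself needs.

```python
import shutil

# Assumed executable names for the tools listed above; adjust them for your system.
REQUIRED_TOOLS = {
    "Torch7": "th",
    "MATLAB": "matlab",
    "bash": "bash",
    "ImageMagick": "convert",
    "GNU awk": "gawk",
}


def missing_tools(tools=REQUIRED_TOOLS):
    """Return the names of tools whose executable is not found on PATH."""
    return [name for name, exe in tools.items() if shutil.which(exe) is None]


if __name__ == "__main__":
    missing = missing_tools()
    print("Missing:", ", ".join(missing) if missing else "nothing")
```

This only confirms that the executables can be found. It does not check versions, the CUDA setup, or the Python packages.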

A scientific paper describing the neural network was published on March 22, 2017 (arXiv:1703.07834, pdf).