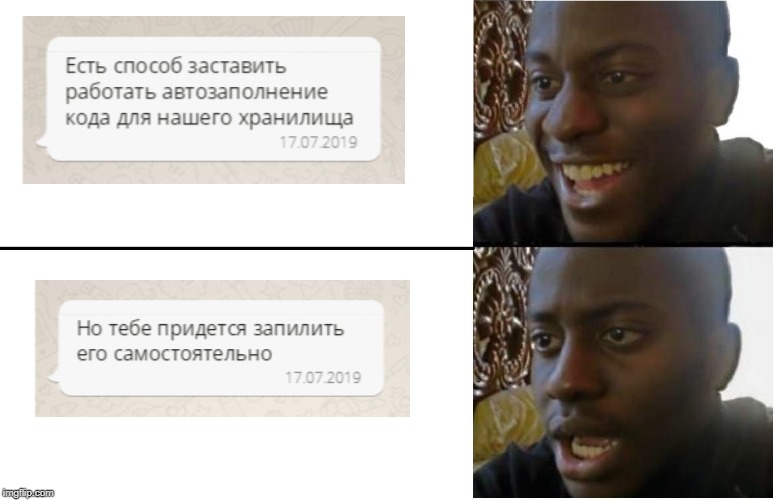

Do you like to repeat routine operations from time to time? Here I am. But each time in the SQL client when working with the Rostelecom repository, I had to register all the joins between the tables handles. And this despite the fact that in 90% of cases, the fields and conditions for joining tables coincided from query to query! It would seem that any SQL client has autocomplete functions, but it doesn’t always work for repositories: they rarely have unique constraint and foreign key in order to improve performance, and without this, the program won’t know how entities are related and what it can do for you to offer.

Having gone through denial, anger, bargaining, depression, and approaching acceptance, I decided - why not try to implement auto-completion with blackjack myself and as it should? I use the dbeaver client, written in java, it has an open source community version. A simple plan has ripened:

- Find autocomplete classes in source code

- Reorient them to work with external metadata and pull out information about joins from there

- ??????

- PROFIT

I quickly figured out the first item - I found a request for adjusting autofill in the bugtracker and found the SQLCompletionAnalyzer class in the related commit. I looked at the code - what I need. It remains to rewrite it so that everything works. I waited for a free evening and began to think through the implementation. Table linking rules (metadata) decided to lead in json. I had no practical experience with this format and the current task was seen as an opportunity to correct this omission.

To work with json, I decided to use the

json-simple library from Google. Here surprises began. As it turned out, dbeaver, as a tru-application, is written on the eclipse platform using the OSGi framework. For experienced developers, this thing gives the convenience of dependency management, but for me it was more like dark magic, which I was clearly not ready for: as usual, I register the import of the classes I need from the json-simple library in the header of the edited class, I specify it in pom. xml, after which the project categorically refuses to properly assemble and fails with errors.

As a result, we managed to fix the assembly errors: I registered the library not in pom.xml, but in the manifest.mf manifest, as required by OSGI, while specifying it as import-package. Not the most beautiful solution, but it works. Then the next surprise appeared. If you are developing in intellij idea, you can’t just start and debug your project based on the eclipse platform: an inexperienced developer should suffer no less than an analyst without autocompletion of requests. The beaver developers themselves came to the rescue, indicating in the wiki all the dances with a tambourine that need to be done. The most annoying thing is that even after all these squats, the project did not want to run in debug with the json library connected via import-package (despite the fact that it was still successfully assembled into the finished product).

By that time, I managed to feel the inconvenience of using json for my task - after all, the metadata was supposed to be edited manually, and for this the xml format is better suited. The second argument in favor of xml was the presence in the JDK of all the necessary classes, which made it possible to stop fighting with an external library. With great pleasure I transferred all the metadata from json to xml and proceeded to edit the autocomplete logic.

Metadata example<?xml version="1.0" encoding="UTF-8" standalone="yes"?> <tableRelations> <tableRelation> <leftTable>dim_account</leftTable> <rightTable>dim_partner</rightTable> <joinColumnPair leftColumn="partner_key" rightColumn="partner_key"/> <joinColumnPair leftColumn="src_id" rightColumn="src_id"/> </tableRelation> <tableRelation> <leftTable>dim_account</leftTable> <rightTable>dim_branch</rightTable> <joinColumnPair leftColumn="src_id" rightColumn="src_id"/> <joinColumnPair leftColumn="branch_key" rightColumn="branch_key"/> </tableRelation> </tableRelations>

As a result, I

made changes to the SQLUtils and SQLCompletionAnalyzer classes. The idea is this: if the program failed to find suitable auto-complete sentences according to the basic logic, then it checks for possible joins using an external xml file. The file itself contains pairs of tables indicating the fields by which these tables need to be linked. Restrictions on the technical validity dates of the eff_dttm and exp_dttm records and the deleted_ind logical delete flag are set by default.

When changes were made to the code, the question arose - who will fill the metadata file? There are a lot of entities in the repository; it is unprofitable to register all the connections yourself. In the end, I decided to hang this task on my fellow analysts. I uploaded the metadata file in svn, from where checkouts are done in a local directory with the program. The principle is this: a new entity has appeared in the repository? One analyst makes possible joins to a file, commits changes, the rest do checkouts to themselves and enjoy working autocomplete: community, knowledge accumulation and all that. Held a workshop for colleagues on the use of the program, wrote an article in confluence - now the company has more than one convenient tool.

Work on this feature gave me an understanding that one should not be afraid to pick open source projects - as a rule, they have a clear architecture, and even basic knowledge of the language will be enough for experiments. And with a certain degree of perseverance, you can even get rid of the hated routine operations, saving yourself time for new experiments.