Hello, Habr! I present to you a translation of the article "Service mesh data plane vs control plane" by Matt Klein.

This time I wanted to translate a description of both components of a service mesh: the data plane and the control plane. Of the descriptions I have read, this one seemed the clearest and most interesting and, most importantly, it helps answer the question "Is it even necessary?"

As the idea of a “service mesh” has become increasingly popular over the past two years (the original article was published on October 10, 2017) and the number of participants in the space has grown, I have seen a commensurate increase in confusion across the technical community about how to compare and contrast the different solutions.

The situation is best described by the following series of tweets that I wrote in July:

Service mesh confusion #1: Linkerd ~= NGINX ~= HAProxy ~= Envoy. None of them equal Istio. Istio is something completely different. 1/

The former are just data planes. By themselves they do nothing. They need to be configured into something more. 2/

Istio is an example of a control plane that ties the pieces together. It is a different layer. /end

The tweets above mention several different projects (Linkerd, NGINX, HAProxy, Envoy and Istio) but, more importantly, introduce the general concepts of the service mesh, the data plane and the control plane. In this post I will take a step back and explain, at a very high level, what I mean by the terms “data plane” and “control plane”, and then describe how these terms relate to the projects mentioned in the tweets.

What is a service mesh (really)?

Figure 1: Service mesh overview

Figure 1 illustrates the service mesh concept at its most basic level. There are four service clusters (A-D). Each service instance is colocated with a local proxy. All network traffic (HTTP, REST, gRPC, Redis, etc.) from an individual application instance flows through its local proxy to the appropriate external service cluster. Thus, the application instance is not aware of the network at large and only knows about its local proxy. In effect, the distributed system network has been abstracted away from the service.

Data plane

In a service mesh, the proxy that runs locally alongside the application performs the following tasks:

- Service discovery: Which services/applications are available to your application?

- Health checking: Are the service instances returned by service discovery healthy and ready to accept network traffic? This can include both active health checks (e.g., probing a /healthcheck endpoint) and passive health checks (e.g., treating 3 consecutive 5xx errors as a sign that an instance is unhealthy).

- Routing: Given a request for "/foo" from a REST service, to which service cluster should the request be sent?

- Load balancing: Once a service cluster has been selected during routing, to which service instance should the request be sent? With what timeout? With what circuit breaking settings? If the request fails, should it be retried?

- Authentication and authorization: For incoming requests, can the calling service be cryptographically authenticated using mTLS or some other mechanism? If it is authenticated, is it allowed to call the requested operation (endpoint) on the service, or should an unauthenticated response be returned?

- Observability: For each request, detailed statistics, logs, and distributed tracing data should be generated so that operators can understand the distributed traffic flow and debug problems as they arise.

All of the items above are the responsibility of the service mesh data plane. In effect, the proxy local to the service (the sidecar) is the data plane. In other words, the data plane is responsible for conditionally translating, forwarding, and observing every network packet that flows to and from a service instance.
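To make a few of these responsibilities concrete, here is a minimal sketch of a toy local proxy that does prefix routing, round-robin load balancing, and passive health checking using the "3 consecutive 5xx" rule mentioned above. The names `Instance`, `SidecarProxy`, and the addresses are hypothetical and are not taken from any real proxy; a production data plane does far more than this.

```python
from dataclasses import dataclass, field
from itertools import count


@dataclass
class Instance:
    """One upstream service instance as seen by the local proxy (hypothetical)."""
    address: str
    consecutive_5xx: int = 0

    @property
    def healthy(self) -> bool:
        # Passive health check: unhealthy after 3 consecutive 5xx responses.
        return self.consecutive_5xx < 3


@dataclass
class SidecarProxy:
    """Toy data plane: routing, load balancing, passive health checking."""
    routes: dict[str, str]                 # path prefix -> cluster name
    clusters: dict[str, list[Instance]]    # cluster name -> instances
    _counter: count = field(default_factory=count)

    def route(self, path: str) -> str:
        # Routing: the longest matching prefix selects the service cluster.
        matches = [p for p in self.routes if path.startswith(p)]
        if not matches:
            raise LookupError(f"no route for {path}")
        return self.routes[max(matches, key=len)]

    def pick_instance(self, cluster: str) -> Instance:
        # Load balancing: round robin over currently healthy instances.
        healthy = [i for i in self.clusters[cluster] if i.healthy]
        if not healthy:
            raise RuntimeError(f"no healthy instances in {cluster}")
        return healthy[next(self._counter) % len(healthy)]

    def record_response(self, instance: Instance, status: int) -> None:
        # Every response feeds the passive health check.
        if 500 <= status < 600:
            instance.consecutive_5xx += 1
        else:
            instance.consecutive_5xx = 0


proxy = SidecarProxy(
    routes={"/foo": "service-b"},
    clusters={"service-b": [Instance("10.0.0.1:8080"), Instance("10.0.0.2:8080")]},
)
target = proxy.pick_instance(proxy.route("/foo/items"))
```

Note that the routes and cluster membership are simply handed to the proxy in this sketch; where they come from is exactly the question the next section addresses.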

The control plane

The network abstraction that the local proxy in the data plane provides is magical. However, how does the proxy actually know to route "/foo" to service B? How is the service discovery data that the proxies query populated? How are load balancing, timeouts, circuit breaking, etc. configured? How is an application deployed using the blue/green method or gradual traffic shifting? Who configures system-wide authentication and authorization?

All of the above items are managed by the control plane of the service mesh.
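As a minimal sketch of one such policy, gradual traffic shifting can be expressed as a set of weights that the control plane publishes and the data plane merely applies. The function name `choose_version`, the version labels, and the idea of hashing a request ID are assumptions made for illustration, not the mechanism of any particular mesh.

```python
import hashlib


def choose_version(request_id: str, weights: dict[str, int]) -> str:
    """Pick a deployment version for a request according to relative weights.

    Hashing the request ID keeps the choice deterministic for a given request.
    """
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    digest = hashlib.sha256(request_id.encode()).digest()
    bucket = int.from_bytes(digest[:8], "big") % total
    for version, weight in weights.items():
        if bucket < weight:
            return version
        bucket -= weight
    raise AssertionError("unreachable: bucket is always below the total weight")


# Policies a control plane might publish; the proxies only apply them.
blue_green = {"blue": 0, "green": 100}   # hard cut-over to the new version
canary = {"blue": 90, "green": 10}       # gradual shift: roughly 10% to green

version = choose_version("request-1234", canary)
```

Changing the deployment strategy then means changing the published weights, not touching any individual proxy by hand.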

The control plane takes a set of isolated, stateless proxy servers and turns them into a distributed system.

I think the reason many technologists find the separate concepts of the data plane and the control plane confusing is that, for most people, the data plane is familiar while the control plane is foreign. We have been working with physical network routers and switches for a long time. We understand that packets/requests must get from point A to point B, and that we can use hardware and software to make that happen. The new generation of software proxies is simply a trendier version of tools we have been using for a long time.

Figure 2: Human control plane

However, we have also been using control planes for a long time, although most network operators might not associate that part of the system with a piece of technology. The reason is simple:

Most of the control planes in use today are... us.

Figure 2 shows what I call the "human control plane." In this type of deployment, which is still very common, a human operator (probably a grumpy one) creates static configurations, potentially with the help of scripts, and deploys them to all proxies using some kind of bespoke process. The proxies then pick up this configuration and carry on with data plane processing using the updated settings.

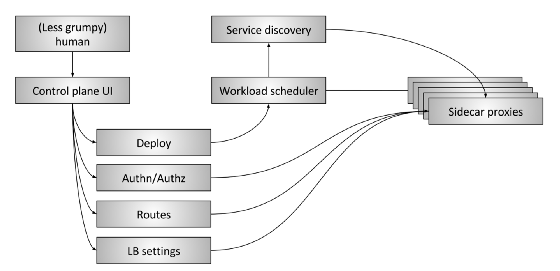

Figure 3: Advanced service mesh control plane

Figure 3 shows an “advanced” service mesh control plane. It consists of the following parts:

- The human: There is still a person (hopefully a less grumpy one) who makes high-level decisions about the entire system.

- Control plane UI: The person interacts with some type of user interface to control the system. It can be a web portal, a command line application (CLI), or some other interface. Through the user interface, the operator has access to global system configuration parameters such as:

  - Deployment control: blue/green and/or gradual traffic shifting

  - Authentication and authorization settings

  - Route table specifications, e.g., what happens when application A requests "/foo"

  - Load balancer settings, such as timeouts, retries, circuit breaking parameters, etc.

- Workload scheduler: Services are run on the infrastructure through some type of scheduling/orchestration system, such as Kubernetes or Nomad. The scheduler is responsible for bootstrapping a service along with its local proxy.

- Service discovery: As the scheduler starts and stops service instances, it reports their liveness state to a service discovery system.

- Sidecar proxy configuration APIs: The local proxies dynamically fetch state from the various system components in an “eventually consistent” manner, without operator involvement. The entire system, made up of all currently running service instances and local proxies, eventually converges. Envoy's data plane API is one example of how this works in practice.

In essence, the goal of the control plane is to set policy that will eventually be enacted by the data plane. More advanced control planes abstract more pieces of the system away from the operator and require less manual handholding (assuming they work correctly!).
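The eventually consistent flow described above can be sketched as follows: a scheduler reports instance starts and stops to the control plane, and each proxy pulls a versioned snapshot whenever it gets around to it. This is only an illustration under stated assumptions; the classes `ControlPlane` and `Proxy` and their methods are hypothetical and do not reproduce Envoy's actual data plane API.

```python
from dataclasses import dataclass, field


@dataclass
class ControlPlane:
    """Holds the desired state; never touches a request itself (hypothetical)."""
    version: int = 0
    endpoints: dict[str, set[str]] = field(default_factory=dict)

    def register(self, service: str, address: str) -> None:
        # Called by the scheduler when a service instance starts.
        self.endpoints.setdefault(service, set()).add(address)
        self.version += 1

    def deregister(self, service: str, address: str) -> None:
        # Called by the scheduler when a service instance stops.
        self.endpoints.get(service, set()).discard(address)
        self.version += 1

    def snapshot(self) -> tuple[int, dict[str, frozenset[str]]]:
        # Immutable copy, so a proxy never observes a half-applied update.
        return self.version, {s: frozenset(a) for s, a in self.endpoints.items()}


@dataclass
class Proxy:
    """Data plane instance that pulls configuration on its own schedule."""
    seen_version: int = -1
    endpoints: dict[str, frozenset[str]] = field(default_factory=dict)

    def sync(self, control_plane: ControlPlane) -> bool:
        version, endpoints = control_plane.snapshot()
        if version == self.seen_version:
            return False  # already up to date
        self.seen_version, self.endpoints = version, endpoints
        return True


control_plane = ControlPlane()
proxy_1, proxy_2 = Proxy(), Proxy()
control_plane.register("service-b", "10.0.0.1:8080")
proxy_1.sync(control_plane)  # proxy_1 is current, proxy_2 is still stale
proxy_2.sync(control_plane)  # now both proxies have converged
```

Between the two `sync` calls the proxies briefly disagree about the state of the world; nobody has to intervene, because each proxy converges the next time it pulls.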

Data plane vs. control plane summary

- Service mesh data plane: touches every packet/request in the system. Responsible for service discovery, health checking, routing, load balancing, authentication/authorization, and observability.

- Service mesh control plane: provides policy and configuration for all of the running data planes in the service mesh. Does not touch any packets/requests in the system. The control plane turns all of the data planes into a distributed system.

Current project landscape

With the explanation above in mind, let's look at the current state of the service mesh project landscape.

- Data planes: Linkerd, NGINX, HAProxy, Envoy, Traefik

- Control planes: Istio, Nelson, SmartStack

Instead of conducting an in-depth analysis of each of the above solutions, I will briefly dwell on some points that, in my opinion, cause most of the confusion in the ecosystem right now.

In early 2016, Linkerd was one of the first data plane proxies for the service mesh and did a fantastic job of raising awareness of, and attention to, the service mesh design model. Envoy followed about 6 months later (although it had been in use at Lyft since late 2015). Linkerd and Envoy are the two projects most often mentioned when service meshes are discussed.

Istio was announced in May 2017. The goals of the Istio project look very much like the advanced control plane shown in Figure 3. Envoy is Istio's default proxy. Thus, Istio is the control plane and Envoy is the data plane. In a short time, Istio generated a lot of excitement, and other data planes began to integrate as replacements for Envoy (both Linkerd and NGINX have demonstrated integration with Istio). The fact that different data planes can be used within a single control plane means that the control plane and the data plane are not necessarily tightly coupled. An API such as Envoy's universal data plane API can form a bridge between the two parts of the system.
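The decoupling described here can be sketched as a control plane that depends only on a shared interface rather than on a specific proxy. In the hypothetical example below, `DataPlane`, `ProxyA`, `ProxyB`, and `push_policy` are invented names standing in for a common API and two unrelated proxy implementations; none of them corresponds to a real Istio or Envoy interface.

```python
from typing import Protocol


class DataPlane(Protocol):
    """Hypothetical contract a control plane expects from any proxy."""

    def apply_routes(self, routes: dict[str, str]) -> None: ...


class ProxyA:
    """Stand-in for one proxy implementation; keeps routes as a dict."""

    def __init__(self) -> None:
        self.routes: dict[str, str] = {}

    def apply_routes(self, routes: dict[str, str]) -> None:
        self.routes = dict(routes)


class ProxyB:
    """Stand-in for a different proxy; renders routes as config lines."""

    def __init__(self) -> None:
        self.config_lines: list[str] = []

    def apply_routes(self, routes: dict[str, str]) -> None:
        self.config_lines = [
            f"route {prefix} -> {cluster}" for prefix, cluster in sorted(routes.items())
        ]


def push_policy(proxies: list[DataPlane], routes: dict[str, str]) -> None:
    # The control plane relies only on the shared interface, not on the proxy type.
    for proxy in proxies:
        proxy.apply_routes(routes)


a, b = ProxyA(), ProxyB()
push_policy([a, b], {"/foo": "service-b"})
```

Each proxy is free to represent the policy internally however it likes, as long as it honors the agreed contract.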

Nelson and SmartStack help further illustrate the separation of the control plane from the data plane. Nelson uses Envoy as its proxy and builds a robust service mesh control plane around the HashiCorp stack (i.e., Nomad, etc.). SmartStack was perhaps the first of the new wave of service meshes. SmartStack forms a control plane around HAProxy or NGINX, further demonstrating that the service mesh control plane and data plane can be decoupled.

Microservice architectures built on a service mesh are attracting more and more attention (rightfully so!), and more and more projects and vendors are starting to work in this direction. Over the next few years, we will see a lot of innovation in both data planes and control planes, as well as further intermixing of the various components. Ultimately, the microservice architecture should become more transparent and magical for the operator.

Hopefully a less and less grumpy one.

Key takeaways

- A service mesh consists of two distinct parts: a data plane and a control plane. Both components are required; without both, the system will not work.

- Everyone is familiar with the control plane, although the control plane might be you!

- All data planes compete on features, performance, configurability, and extensibility.

- All control planes compete on features, configurability, extensibility, and usability.

- A single control plane may contain the right abstractions and APIs so that multiple data planes can be used with it.